US AI Regulation FAQ: Corporate Governance for Foreign Tech Companies

- AI risk oversight is increasingly treated as part of a board's fiduciary oversight duties under US corporate governance standards.

- Foreign tech companies operating in the US should maintain audit-ready documentation aligned with federal frameworks such as the NIST AI Risk Management Framework.

- Failing to prove the legal origin of training data can expose a company to federal enforcement, including algorithmic disgorgement.

- Boards must formally assign human accountability for automated decision-making systems.

Required AI Risk Management Documentation

Foreign tech companies operating in the US should maintain algorithmic impact assessments, data provenance logs, model and system cards, board oversight charters, and AI incident response plans. These documents help demonstrate that your board oversees AI risks and that your company meets US market expectations.

The NIST Artificial Intelligence Risk Management Framework (AI RMF) is voluntary, but it is widely treated as the reference point for what US regulators expect to find in a company's corporate records. Core documentation includes:

- Algorithmic Impact Assessments (AIA): Formal reports evaluating how AI systems affect US consumers. They must analyze bias, privacy, and security risks.

- Data Provenance Records: Detailed logs documenting exactly where training data was sourced. These records must explain how consent was obtained and list the terms governing data use.

- Model and System Cards: Standardized documents summarizing a machine learning model's performance, intended use, and known limitations.

- Board Oversight Charters: Corporate resolutions and committee charters delegating AI risk management duties to specific executives.
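
As an illustration of the data provenance records described above, the following is a minimal sketch of one way to structure a log entry and check it for gaps. The class name `ProvenanceRecord`, its field names, and the sample values are hypothetical and chosen for this example; no regulator or framework prescribes this schema.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class ProvenanceRecord:
    """One log entry per training dataset (hypothetical schema)."""

    dataset_name: str
    source: str          # where the data was obtained
    collected_on: date   # when it was collected or purchased
    consent_basis: str   # how consent was obtained
    license_terms: str   # terms governing use of the data

    def missing_fields(self) -> list[str]:
        """Return the names of required text fields that were left blank."""
        required = {
            "source": self.source,
            "consent_basis": self.consent_basis,
            "license_terms": self.license_terms,
        }
        return [name for name, value in required.items() if not value.strip()]


# Example entry with the consent basis left undocumented.
record = ProvenanceRecord(
    dataset_name="support-chat-transcripts",
    source="Licensed from a third-party vendor",
    collected_on=date(2024, 3, 1),
    consent_basis="",
    license_terms="Commercial model training permitted",
)
gaps = record.missing_fields()
print(gaps)
```

A check like this could run before a dataset is approved for training, so that an entry with an undocumented consent basis is caught internally rather than during an audit.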

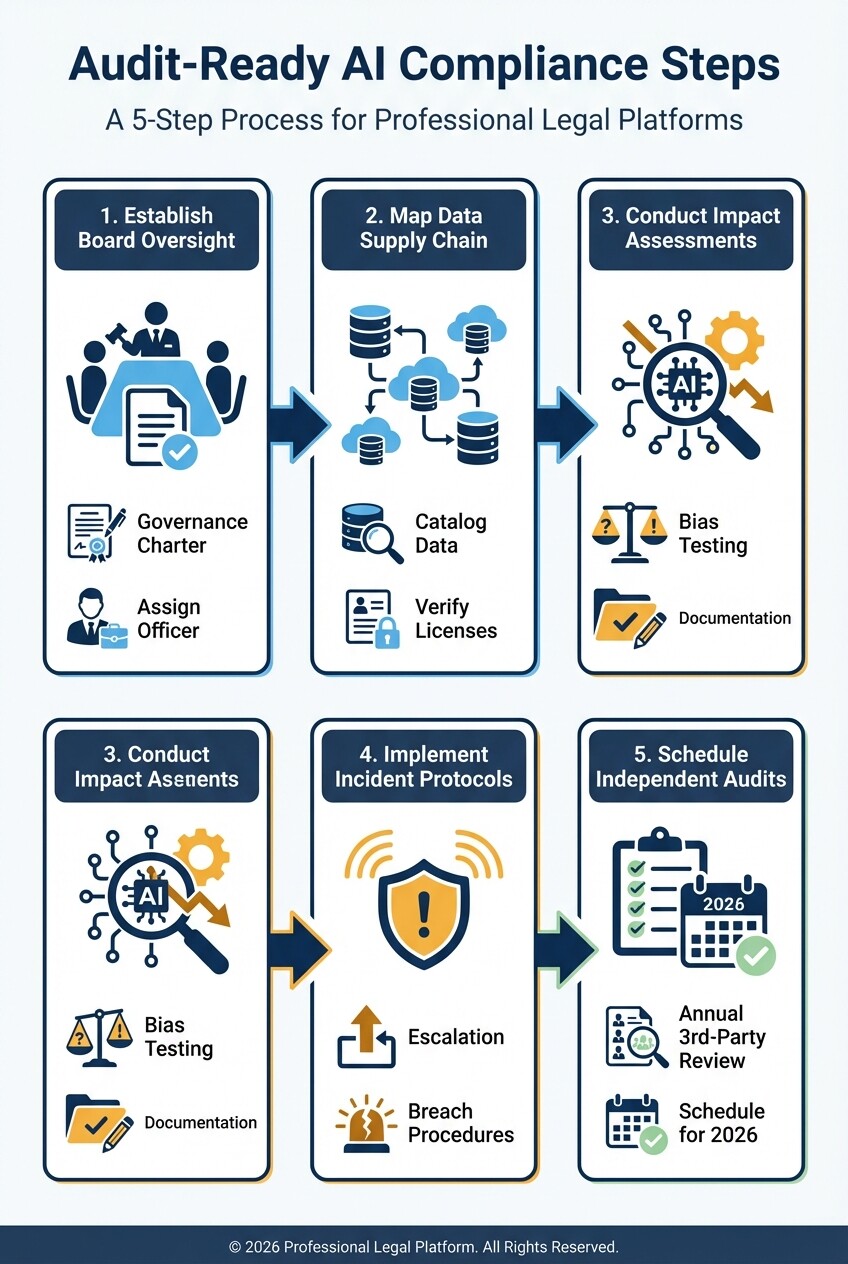

Step-by-Step Checklist for Audit-Ready AI Compliance

Audit-ready compliance requires logging how you train, deploy, and monitor AI models. Use this checklist to establish a compliance baseline before entering the US market:

- Establish Board-Level Oversight
  - Draft and adopt an AI Governance Charter.
  - Assign a specific officer to lead AI risk management.
  - Mandate quarterly AI risk reports in board meeting minutes.

- Map the Data Supply Chain
  - Catalog all internal and external data sources used for model training.
  - Verify and save copies of third-party licensing agreements.
  - Document opt-out mechanisms provided to US consumers.

- Conduct Impact Assessments
  - Run bias and fairness testing before deploying new models.
  - Document the testing methodology and evaluated demographic groups.
  - Log remediation steps taken when biases appear.

- Implement Incident Protocols
  - Create a procedure for handling AI hallucinations or data breaches.
  - Define the board escalation path for high-severity incidents.
  - Draft notification templates for US regulators and affected consumers.

- Schedule Independent Audits
  - Retain third-party algorithmic auditors annually.
  - Archive auditor findings alongside internal remediation plans.
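
The bias and fairness testing step in the checklist above can take many forms. One simple starting point is to compare selection rates across demographic groups. The sketch below assumes outcomes are available as (group, selected) pairs and uses a 0.8 ratio as the flagging threshold. That threshold is borrowed from the "four-fifths" rule of thumb used in US employment selection guidance and is an assumption of this example, not a standard stated in this guide. The function names and sample data are hypothetical.

```python
from collections import Counter


def selection_rates(outcomes):
    """Compute the share of positive decisions per group.

    outcomes: iterable of (group, was_selected) pairs.
    """
    totals = Counter()
    selected = Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected in any group, so there is no disparity to measure.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """Return groups whose ratio falls below the (assumed) threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical test data: 100 decisions per group.
sample = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)
rates = selection_rates(sample)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)
print(rates, ratios, flagged)
```

A ratio below the threshold is a prompt for further investigation and documented remediation, not proof of unlawful bias. The appropriate metric and threshold depend on the use case and should be chosen with counsel and the auditors.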

Structuring Corporate Governance Policies

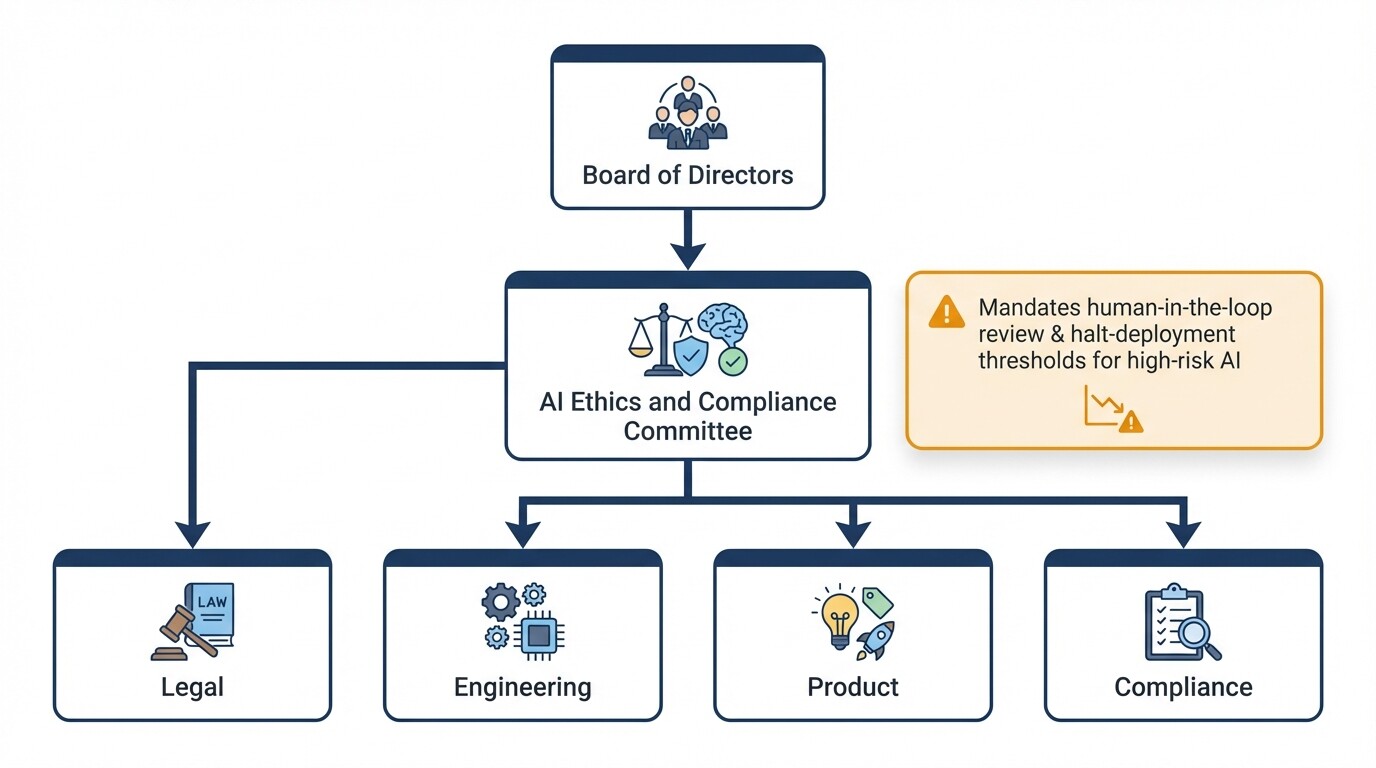

You must integrate AI oversight directly into your existing corporate governance hierarchy, starting with the board of directors. Your framework must assign clear human accountability for automated decision-making.

Foreign companies must adapt their organizational structures to match the compliance standards required of US domestic corporations. Establish an AI Ethics and Compliance Committee that reports directly to the board. Include representatives from legal, engineering, product, and compliance teams.

Internal policies must require human-in-the-loop review for high-risk AI applications in employment, credit, or healthcare. Policies must also define the risk thresholds that trigger a mandatory halt to AI deployment when exceeded.
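
To show how such a policy might be expressed in an engineering workflow, here is a minimal sketch of a deployment gate. It assumes each model release carries a single numeric risk score between 0 and 1 produced by an internal assessment. The score, the `halt_threshold` value, and all names below are hypothetical and would need to be defined by your own governance process.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    DEPLOY = "deploy"
    HUMAN_REVIEW = "human_review"
    HALT = "halt"


# High-risk application areas named in the policy text above.
HIGH_RISK_DOMAINS = {"employment", "credit", "healthcare"}


@dataclass(frozen=True)
class RiskPolicy:
    # Hypothetical corporate limit; a score at or above it stops deployment.
    halt_threshold: float


def gate(domain: str, risk_score: float, policy: RiskPolicy) -> Decision:
    """Decide whether a release may proceed under the given policy."""
    if risk_score >= policy.halt_threshold:
        return Decision.HALT
    if domain in HIGH_RISK_DOMAINS:
        # High-risk uses always go to a human reviewer before release.
        return Decision.HUMAN_REVIEW
    return Decision.DEPLOY


policy = RiskPolicy(halt_threshold=0.7)
print(gate("credit", 0.2, policy))
print(gate("marketing", 0.9, policy))
print(gate("marketing", 0.2, policy))
```

Checking the halt threshold first means that a release exceeding the corporate limit is stopped even in a high-risk domain where human review would otherwise apply.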

Common Mistakes with AI Data Supply Chains

Founders frequently assume offshore data collection shields them from US liability. It does not. The Federal Trade Commission (FTC) can pursue unfair or deceptive data practices that affect US consumers regardless of where the training data was scraped or purchased.

Common compliance mistakes include:

- Black box third-party vendors: Purchasing datasets or foundation models without demanding indemnification or provenance logs exposes you to liability. If a vendor's data violates US privacy laws, the company deploying the AI in the US can still be held liable.

- Assuming anonymized data is exempt: Stripping names from datasets does not remove compliance obligations. US regulators may treat data that can be re-identified, including through AI-driven inference, as personal information.

- Missing consent documentation: Scraping public data in foreign jurisdictions does not automatically make it lawful to use in commercial AI products targeting US consumers. Failing to document how consumer consent was obtained and managed invites regulatory scrutiny.

Frequently Asked Questions About Cross-Border Liability

Are foreign companies liable for AI bias under US law?

Generally, yes. If a foreign company's AI system interacts with US consumers, makes decisions affecting US residents, or impacts US markets, the company can be subject to US anti-discrimination and consumer protection laws.

Do we need a US-based AI compliance officer?

Federal law does not require your compliance officer to reside in the United States. However, you should designate a corporate officer who is familiar with US federal guidelines and accessible to US regulatory agencies.

What is the penalty for failing to maintain AI provenance records?

The FTC has used algorithmic disgorgement in enforcement orders to require companies to delete improperly obtained data along with the models and algorithms trained on that data. Companies may also face civil monetary penalties.

When to Hire a Lawyer

Retain US legal counsel before launching an AI product in the US market, scraping data for model training, or entering into data licensing agreements. Working with experienced US corporate governance lawyers helps ensure your board resolutions, data supply chain contracts, and compliance charters can withstand regulatory scrutiny.

Next Steps

Begin by auditing your existing data practices using the checklist in this guide. Update your corporate board charters to explicitly include AI risk oversight. Then initiate algorithmic impact assessments for any AI products currently accessible to US consumers, so you can identify vulnerabilities before regulators intervene.